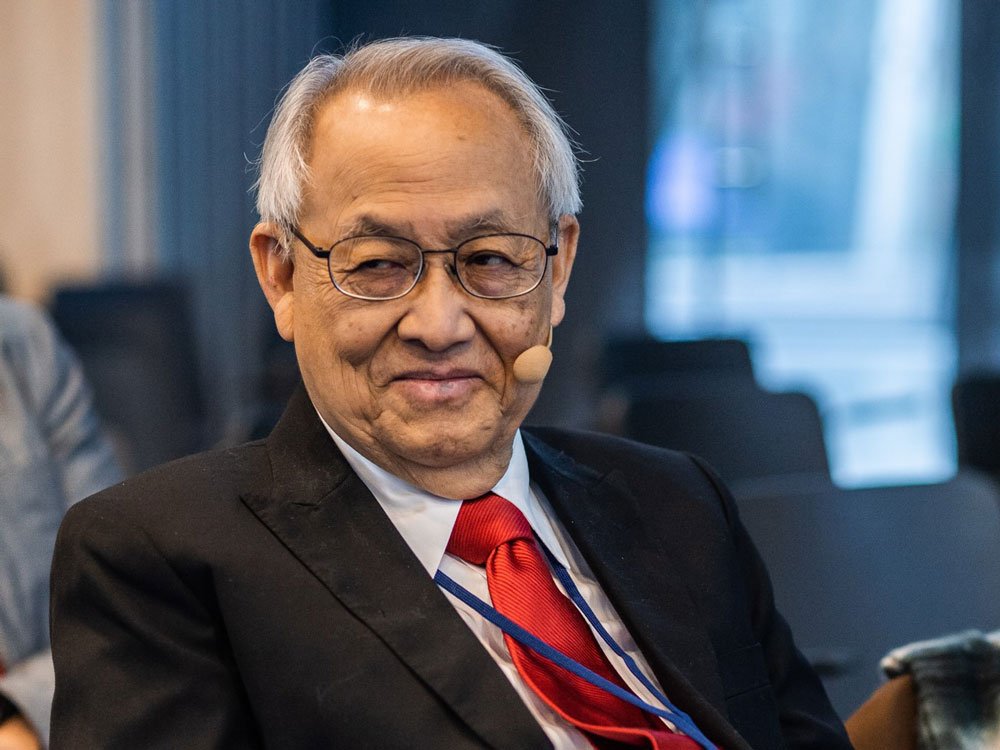

“Artificial intelligence is unprecedented in the history of civilization. Usually these things advance and we can say – yes, we understand it. This time it’s different. That’s why it’s not satisfactory from my perspective,” says Professor Leon Chua of the University of California, Berkeley, in conversation with Maciej Chojnowski.

Maciej Chojnowski: You often draw an analogy between how nature and certain kinds of machines work. Do you think in today’s engineering this sort of inspiration from nature is important or is it just something accidental?

Prof. Leon Chua: It depends on the application. In general, engineers are very narrow. They specialize in a certain direction and they don’t know much more about anything else, right? That may be good, because they concentrate a lot, but it’s also a disadvantage, because they’re somewhat limited in their scope. They are the followers in a position where other people create and they just carry it out.

If you want to be creative and original, you’ve got to get out of this bind, this cocoon. Even if you’re a very smart person but you live inside the cocoon, you still can’t create, can you? It all depends. If your objective is to do well in your job, your profession, to be promoted – then it’s perfectly all right. But if you are ambitious and capable, it may hurt you, because you’re not going to create much. So it all depends on the personality.

You once said that you did not invent the memristor but discovered it. Could you elaborate on that?

It’s very simple. There’s a difference between invention and discovery. Invention, typically, is limited. It’s good for a short period and becomes obsolete. Then other people invent a better one and you’re done.

Discovery is different. It is a fundamental law of nature. A very good example: you don’t say Columbus invented America. Everyone says that Columbus discovered America. Because America was already there. It’s a part of nature. So when I say I discovered the memristor, it’s a concept, it’s a law of nature. It will never be obsolete.

So one can say that discovery belongs to science and invention to engineering, right?

That’s right, engineering is something that becomes superseded, although it may be very useful. But nature – nothing will ever supersede it. It’s always there, just like Newton’s law is always there or Einstein’s theory. Neither is wrong; one is just a supplement to the other. That’s discovery.

Why did it take almost forty years from the publication of your paper on the memristor for HPE to actually build it?

Thirty-seven years, to be exact. The reason is very simple: in the last forty years someone invented the MOS process technology. It’s a pretty dumb thing but it worked very well. And because Moore’s law essentially says that every two years the number of transistors doubles, and they are twice as powerful at half the cost, it’s a way of making a lot of money. Everybody focused on that. You had to work hard, everybody was competing. And for forty years it has been working like a charm.

So people wouldn’t pay attention, because they were busy trying to get ahead on this thing. And there was no need at that point. The memory devices available then were good enough. And since they were good enough, if you’d said “Hey, look at the memristor”, it wouldn’t have been appreciated. And now the time has come when Moore’s law will end. In twenty years, people will begin to look at what will replace it.

So that’s one reason. It was ahead of its time. That’s the best word. People were not ready. Even if you had discovered it, people didn’t know how to use it.

The second reason was that we had to wait for the technology to be invented, because the technology that makes memristors possible is a very advanced nanotechnology. In actual fact, Moore’s law makes it possible. So it’s a push-pull effect, right?

Could you explain the difference between the deep learning networks we know today and the cellular nonlinear networks you created?

The cellular nonlinear network has a foundation; I had to develop a theory. It’s a rigorous science to create a cellular nonlinear network that’d be able to recognize various objects in the real world. The cellular nonlinear network that I invented with Tamas Roska comes with a systematic method to derive the coefficients describing the system that allows you to do it. But with today’s artificial intelligence, especially deep learning, nobody knows how it works.

This is the black box problem, right?

Yes. It just works. But who knows why it works? That’s a big disadvantage. People can’t fully accept something they don’t understand. You want to be able to demonstrate how it works in order to patent it.

Deep learning today can say it’ll work 95 percent of the time, and that’s good enough. If you’ve got a strange form of cancer, you can actually teach deep learning to diagnose it, even better than humans. There are tremendous advantages and numerous applications. That’s why everyone’s talking about it. But the great disadvantage is nobody knows how it works. And it isn’t good for legal purposes.

Anyway, it’s clear that AI, deep learning, will make an immense impact.

YouTube video: https://youtu.be/iyYfAiZ9wrM?list=PLtS6YX0YOX4eAQ6IrOZSta3xjRXzpcXyi

Source: HPE Technology / YouTube

So why build brain-like computers?

Because the present computing system has limited capability and there are certain things our brain can do very well that even a supercomputer cannot do. For example, you have a crowd of a thousand people and your girlfriend happens to be there. If you see just part of her face, you can say – my girlfriend is there. A computer would never be able to do that, because it doesn’t have enough data. Our brains have certain abilities no current computer can emulate.

Your brain burns only 25 watts, like a little light bulb. If you take Google’s Go player, it takes megawatts. That means a whole small village’s worth of electricity is needed to solve the problem.

What do you think about the future of AI? How do you think it will develop?

I think it will make major useful achievements. Many diseases will be diagnosed, probably even better than by doctors. Because, once you have data, deep learning will work. I call it brute force, because first of all, nobody understands why it works, but it does. To do that you need a lot of data, a lot of examples. You keep feeding data to this dumb machine, whose principles of operation nobody knows. If you are patient enough and put in a lot of energy, then – eventually – you’ll find answers.

If you come up with a certain diagnosis of cancer, then that’ll be a great contribution, because even doctors can’t recognize it. It’ll be a major contribution to humanity. And there’ll be many examples like that. It’s so unprecedented in the history of civilization. Usually these things advance and we can say – yes, we understand it. This time it’s different. That’s why it’s not satisfactory from my perspective.

It’s very useful though, so it can solve a lot of human, societal problems. You’ll see an immense number of advancements, good for mankind.

Prof. Leon Chua is widely known for his discovery of the memristor in 1971. He is a professor in the electrical engineering and computer sciences department at the University of California, Berkeley. He has contributed to nonlinear circuit theory and cellular neural network theory. His research has been recognized internationally through numerous major awards, including 17 honorary doctorates from major universities in Europe and Japan. He has been honored with many major prizes, including the IEEE Neural Networks Pioneer Award in 2000, the Guggenheim Fellow award in 2010, and the EU Marie Curie Fellow award in 2013.

We would like to thank the organizers of Supercomputing Frontiers Europe 2019 for their help in arranging the interview with Prof. Leon Chua.

Read the Polish version of this text HERE
